If you are, or have ever been, responsible for inspecting and analyzing system logs on Linux, you know what a nightmare that task can become when several services are being monitored at the same time.

In the past, the task had to be done by hand, with each type of log handled separately. Fortunately, the combination of Elasticsearch, Logstash, and Kibana on the server side, together with Filebeat on the client side, turns that once-difficult job into a walk in the park.

The first three components form what is called the ELK stack, whose purpose is to collect logs from multiple servers at the same time.

A web-based interface (Kibana) lets you inspect those logs at a glance and troubleshoot problems more easily. The logs are sent from the clients to the central server by Filebeat, which can be described as a log shipping agent.

Let's see how these pieces fit together.

Test environment:

- Central server: CentOS 7, 192.168.0.29

- Client #1: CentOS 7, 192.168.0.100

- Client #2: Debian, 192.168.0.101

Installing the ELK Stack on the Server

Let's begin by installing the ELK stack on the server. Each component has its own role:

- Elasticsearch: stores the logs sent by the clients.

- Logstash: processes those logs.

- Kibana: provides the web interface that helps us inspect and analyze the logs.

Install the following packages on the central server.

First, install Java 8, which the ELK components (Elasticsearch and Logstash in particular) depend on:

- yum -y update

- wget --no-cookies --no-check-certificate --header "Cookie: gpw_e24=http%3A%2F%2Fwww.oracle.com%2F; oraclelicense=accept-securebackup-cookie" "http://download.oracle.com/otn-pub/java/jdk/8u131-b11/d54c1d3a095b4ff2b6607d096fa80163/jre-8u131-linux-x64.rpm"

- rpm -Uvh jre*.rpm

- java -version

Enable Elasticsearch Repository

1. Import the Elasticsearch public GPG key to the rpm package manager:

# rpm --import http://packages.elastic.co/GPG-KEY-elasticsearch

2. Insert the following lines into the repository configuration file /etc/yum.repos.d/elasticsearch.repo:

3. Install the Elasticsearch package.

4. Start and enable the service.

5. Allow traffic through TCP port 9200 in your firewall:

6. Check if Elasticsearch responds to simple requests over HTTP:
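Steps 2 through 6 might look roughly like the sketch below. The repository section name, the `2.x` path in `baseurl`, and the helper name `setup_elasticsearch` are assumptions that this article does not state; check Elastic's installation documentation for the repository that matches the version you want. The repository file is staged in the current directory, and the privileged commands are wrapped in a function so that nothing changes on the system until you call it as root.

```shell
# Step 2: stage the repository definition in the current directory.
cat > elasticsearch.repo <<'EOF'
# Assumption: the 2.x package line; adjust baseurl to the version you need.
[elasticsearch-2.x]
name=Elasticsearch repository for 2.x packages
baseurl=http://packages.elastic.co/elasticsearch/2.x/centos
gpgcheck=1
gpgkey=http://packages.elastic.co/GPG-KEY-elasticsearch
enabled=1
EOF

# Steps 3 to 5 (hypothetical helper; run as root on the central server).
# It installs the package, enables the service, and opens TCP port 9200
# both immediately and permanently.
setup_elasticsearch() {
    install -m 644 elasticsearch.repo /etc/yum.repos.d/elasticsearch.repo &&
    yum -y install elasticsearch &&
    systemctl daemon-reload &&
    systemctl enable elasticsearch &&
    systemctl start elasticsearch &&
    firewall-cmd --add-port=9200/tcp &&
    firewall-cmd --add-port=9200/tcp --permanent
}
```

After calling `setup_elasticsearch`, give the service a few seconds to start. For step 6, `curl -X GET http://localhost:9200` should then return a short JSON document describing the node.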

Make sure you complete the above steps and then proceed with Logstash. Since both Logstash and Kibana share the Elasticsearch GPG key, there is no need to re-import it before installing the packages.

Enable Logstash Repository

7. Insert the following lines into the repository configuration file /etc/yum.repos.d/logstash.repo:

8. Install the Logstash package:
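As a sketch of steps 7 and 8, assuming the `2.2` package line (the version path, the section name, and the helper name `install_logstash` are assumptions, not taken from this article):

```shell
# Step 7: stage the repository definition in the current directory.
cat > logstash.repo <<'EOF'
# Assumption: the 2.2 package line; adjust baseurl to the version you need.
[logstash-2.2]
name=Logstash repository for 2.2.x packages
baseurl=http://packages.elastic.co/logstash/2.2/centos
gpgcheck=1
gpgkey=http://packages.elastic.co/GPG-KEY-elasticsearch
enabled=1
EOF

# Step 8 (hypothetical helper; run as root on the central server).
install_logstash() {
    install -m 644 logstash.repo /etc/yum.repos.d/logstash.repo &&
    yum -y install logstash
}
```

The GPG key is not imported again here because it was already imported for Elasticsearch.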

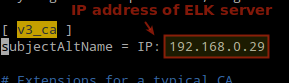

9. To issue the SSL certificate for the IP address of the ELK server, add a subjectAltName entry containing that address (192.168.0.29) below the [ v3_ca ] section in /etc/pki/tls/openssl.cnf.

10. Generate a self-signed certificate valid for 365 days:
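Steps 9 and 10 can be tried out without touching the system-wide configuration. The sketch below is a variation on the article's approach: instead of editing /etc/pki/tls/openssl.cnf, it writes a minimal stand-in configuration (`san.cnf`, a hypothetical name) that carries the same `subjectAltName` line under `[ v3_ca ]`, then generates the key and certificate in the current directory. The file names `logstash-forwarder.key` and `logstash-forwarder.crt` are assumptions.

```shell
# IP address of the ELK server, as given in the test environment.
ELK_IP=192.168.0.29

# Minimal stand-in for openssl.cnf; the [ v3_ca ] section holds the
# subjectAltName line described in step 9.
cat > san.cnf <<EOF
[ req ]
distinguished_name = req_dn
x509_extensions = v3_ca
prompt = no

[ req_dn ]
CN = $ELK_IP

[ v3_ca ]
subjectAltName = IP:$ELK_IP
EOF

# Step 10: self-signed certificate, valid for 365 days, with an
# unencrypted 2048-bit RSA key.
openssl req -config san.cnf -x509 -days 365 -nodes -newkey rsa:2048 \
    -keyout logstash-forwarder.key -out logstash-forwarder.crt

# Show the certificate's subject alternative name for a quick check.
openssl x509 -in logstash-forwarder.crt -noout -text |
    grep -A1 'Subject Alternative Name'
```

The last command should show an `IP Address:192.168.0.29` entry. To follow the article's layout, the key would then go under /etc/pki/tls/private/ and the certificate under /etc/pki/tls/certs/.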

11. Configure Logstash input, output, and filter files:

Input: Create /etc/logstash/conf.d/input.conf and insert the following lines into it. This is necessary for Logstash to “learn” how to process beats coming from clients. Make sure the paths to the certificate and key match the paths used in the previous step:
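A minimal input file might look like the following sketch. The option names are those of the Logstash 2.x `beats` input (newer releases may name them differently), and the certificate and key file names are assumptions. The file is staged in the current directory first.

```shell
# Stage input.conf: listen for Beats connections on TCP port 5044 over SSL.
cat > input.conf <<'EOF'
input {
  beats {
    port => 5044
    ssl => true
    # Assumed file names; point these at the certificate and key
    # generated in the previous step.
    ssl_certificate => "/etc/pki/tls/certs/logstash-forwarder.crt"
    ssl_key => "/etc/pki/tls/private/logstash-forwarder.key"
  }
}
EOF
# As root: cp input.conf /etc/logstash/conf.d/input.conf
```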

Output (/etc/logstash/conf.d/output.conf) file:
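One possible output file is sketched below, assuming Elasticsearch listens on localhost:9200 as set up earlier. The index name is built from the Beat's name and the event date, so Filebeat events land in indices that match the `filebeat-*` pattern used later in Kibana.

```shell
# Stage output.conf: send processed events to the local Elasticsearch node.
cat > output.conf <<'EOF'
output {
  elasticsearch {
    hosts => ["localhost:9200"]
    manage_template => false
    index => "%{[@metadata][beat]}-%{+YYYY.MM.dd}"
  }
}
EOF
# As root: cp output.conf /etc/logstash/conf.d/output.conf
```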

Filter (/etc/logstash/conf.d/filter.conf) file. We will process syslog messages for simplicity:
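A filter for syslog lines could be sketched as follows. It assumes that the clients tag their events with the type `syslog`; the field names (`syslog_timestamp`, `syslog_hostname`, and so on) are illustrative choices, not something this article prescribes.

```shell
# Stage filter.conf: parse syslog-formatted lines and use their timestamp.
cat > filter.conf <<'EOF'
filter {
  if [type] == "syslog" {
    grok {
      match => { "message" => "%{SYSLOGTIMESTAMP:syslog_timestamp} %{SYSLOGHOST:syslog_hostname} %{DATA:syslog_program}(?:\[%{POSINT:syslog_pid}\])?: %{GREEDYDATA:syslog_message}" }
    }
    date {
      match => [ "syslog_timestamp", "MMM  d HH:mm:ss", "MMM dd HH:mm:ss" ]
    }
  }
}
EOF
# As root: cp filter.conf /etc/logstash/conf.d/filter.conf
```

The `grok` block splits each line into timestamp, host, program, optional PID, and message; the `date` block then sets the event time from the parsed timestamp instead of the time of arrival.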

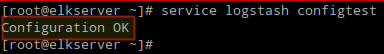

12. Start and enable logstash:

13. Configure the firewall to allow Logstash to get the logs from the clients (TCP port 5044):
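Starting the service and opening the port might be done as in this sketch (the helper name `start_logstash` is hypothetical; run it as root on the central server):

```shell
# Enable and start Logstash, then open TCP port 5044 for the clients,
# both immediately and permanently.
start_logstash() {
    systemctl daemon-reload &&
    systemctl enable logstash &&
    systemctl start logstash &&
    firewall-cmd --add-port=5044/tcp &&
    firewall-cmd --add-port=5044/tcp --permanent
}
```

The first `firewall-cmd` call changes the running firewall; the second stores the rule so that it survives a reboot.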

Enable Kibana Repository

14. Insert the following lines into the repository configuration file /etc/yum.repos.d/kibana.repo:

15. Install the Kibana package:

16. Start and enable Kibana.

17. Make sure you can access Kibana’s web interface from another computer (allow traffic on TCP port 5601):
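Steps 14 through 17 could be sketched like this, assuming the `4.4` package line (the version path, the section name, and the helper name `setup_kibana` are assumptions):

```shell
# Step 14: stage the repository definition in the current directory.
cat > kibana.repo <<'EOF'
# Assumption: the 4.4 package line; adjust baseurl to the version you need.
[kibana-4.4]
name=Kibana repository for 4.4.x packages
baseurl=http://packages.elastic.co/kibana/4.4/centos
gpgcheck=1
gpgkey=http://packages.elastic.co/GPG-KEY-elasticsearch
enabled=1
EOF

# Steps 15 to 17 (hypothetical helper; run as root on the central server).
# It installs Kibana, enables the service, and opens TCP port 5601.
setup_kibana() {
    install -m 644 kibana.repo /etc/yum.repos.d/kibana.repo &&
    yum -y install kibana &&
    systemctl daemon-reload &&
    systemctl enable kibana &&
    systemctl start kibana &&
    firewall-cmd --add-port=5601/tcp &&
    firewall-cmd --add-port=5601/tcp --permanent
}
```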

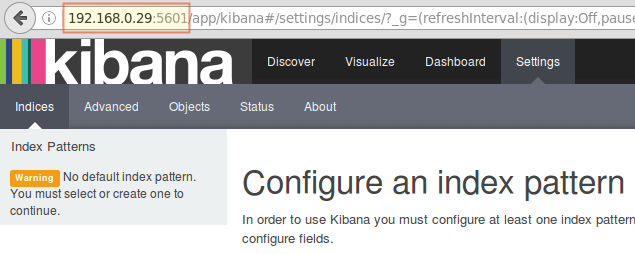

18. Launch Kibana (http://192.168.0.29:5601) to verify that you can access the web interface:

We will return here after we have installed and configured Filebeat on the clients.

Install Filebeat on the Client Servers

We will show you how to do this for Client #1 (repeat for Client #2 afterwards, changing paths if applicable to your distribution).

1. Copy the SSL certificate from the server to the clients:

2. Import the Elasticsearch public GPG key to the rpm package manager:

3. Create a repository for Filebeat (/etc/yum.repos.d/filebeat.repo) in CentOS based distributions:

4. Configure the source to install Filebeat on Debian and its derivatives:

5. Install the Filebeat package:
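The five steps above might be scripted roughly as follows. The legacy Beats repository URLs, the certificate file name, the `beats.list` file name, and both helper names are assumptions; the helpers pull the certificate from the server with `scp`, which requires SSH access to 192.168.0.29.

```shell
# Step 3 (CentOS): stage the repository definition in the current directory.
cat > filebeat.repo <<'EOF'
# Assumption: the legacy Beats repository; adjust to the version you need.
[beats]
name=Elastic Beats Repository
baseurl=https://packages.elastic.co/beats/yum/el/$basearch
enabled=1
gpgkey=https://packages.elastic.co/GPG-KEY-elasticsearch
gpgcheck=1
EOF

# Steps 1, 2, 3 and 5 on a CentOS client (hypothetical helper; run as root).
install_filebeat_centos() {
    scp root@192.168.0.29:/etc/pki/tls/certs/logstash-forwarder.crt /etc/pki/tls/certs/ &&
    rpm --import http://packages.elastic.co/GPG-KEY-elasticsearch &&
    install -m 644 filebeat.repo /etc/yum.repos.d/filebeat.repo &&
    yum -y install filebeat
}

# Steps 1, 4 and 5 on a Debian client (hypothetical helper; run as root).
install_filebeat_debian() {
    mkdir -p /etc/pki/tls/certs &&
    scp root@192.168.0.29:/etc/pki/tls/certs/logstash-forwarder.crt /etc/pki/tls/certs/ &&
    apt-get update &&
    apt-get -y install apt-transport-https &&
    wget -qO - https://packages.elastic.co/GPG-KEY-elasticsearch | apt-key add - &&
    echo "deb https://packages.elastic.co/beats/apt stable main" > /etc/apt/sources.list.d/beats.list &&
    apt-get update &&
    apt-get -y install filebeat
}
```

Call `install_filebeat_centos` on Client #1 and `install_filebeat_debian` on Client #2. The Debian helper creates /etc/pki/tls/certs first because that directory is a Red Hat convention; any other location works as long as the Filebeat configuration points to it.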

Configure Filebeat

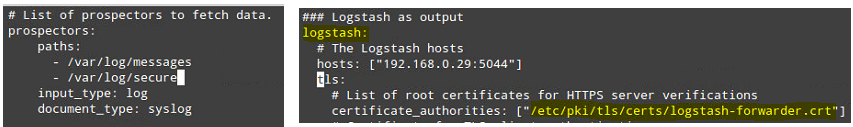

A word of caution here: Filebeat's configuration is stored in a YAML file, which requires strict indentation. Be careful with this as you edit /etc/filebeat/filebeat.yml as follows:

- Under paths, indicate which log files should be “shipped” to the ELK server.

- Under prospectors, set the input type and the document type for those files.

- Under output:

  - Uncomment the line that begins with logstash.

  - Indicate the IP address of your ELK server and the port where Logstash is listening in hosts.

  - Make sure the path to the certificate points to the actual file you created in the Logstash section above (steps 9 and 10).
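Put together, the edited file might resemble the sketch below. The key layout is assumed from Filebeat 1.x (newer releases use different key names), the certificate file name is an assumption, and the two log paths are the ones mentioned in the testing section. The file is staged in the current directory so that it can be reviewed before it replaces the packaged configuration.

```shell
# Stage a minimal filebeat.yml; indentation is significant in YAML.
cat > filebeat.yml <<'EOF'
# Layout assumed from Filebeat 1.x.
filebeat:
  prospectors:
    -
      paths:
        - /var/log/secure
        - /var/log/messages
      input_type: log
      document_type: syslog
output:
  logstash:
    hosts: ["192.168.0.29:5044"]
    tls:
      certificate_authorities: ["/etc/pki/tls/certs/logstash-forwarder.crt"]
EOF
# As root on the client: cp filebeat.yml /etc/filebeat/filebeat.yml
```

On Debian the log files are named differently (for example /var/log/syslog and /var/log/auth.log), so the entries under paths would need to be adjusted for Client #2.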

The above steps are illustrated in the following image:

Save changes, and then restart Filebeat on the clients:
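On a systemd-based client, the restart could be done as in this sketch (the helper name `restart_filebeat` is hypothetical; run it as root):

```shell
# Restart Filebeat and print its service status so that a failed start
# is visible right away.
restart_filebeat() {
    systemctl restart filebeat
    systemctl status filebeat --no-pager
}
```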

Once we have completed the above steps on the clients, feel free to proceed.

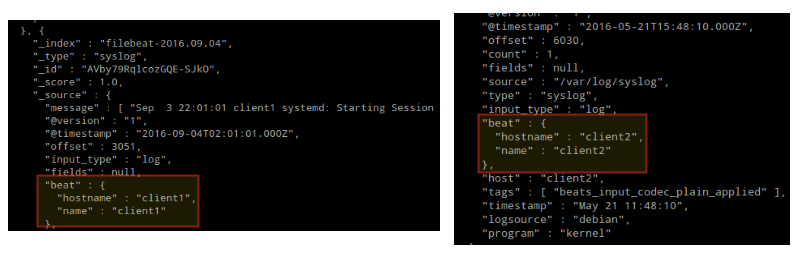

Testing Filebeat

In order to verify that the logs from the clients can be sent and received successfully, run the following command on the ELK server:
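One way to run that check is to query the Filebeat indices through Elasticsearch's search API. The helper name `check_filebeat_index` is hypothetical, and the default address assumes Elasticsearch listens on localhost:9200 as configured earlier.

```shell
# Query all filebeat-* indices and pretty-print the JSON response.
# Optional argument: host:port of Elasticsearch (default localhost:9200).
check_filebeat_index() {
    es_host="${1:-localhost:9200}"
    curl -s -XGET "http://${es_host}/filebeat-*/_search?pretty"
}
```

Calling `check_filebeat_index` on the ELK server prints the matching documents, if any have arrived.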

The output should be similar to (notice how messages from /var/log/messages and /var/log/secure are being received from client1 and client2):

Otherwise, check the Filebeat configuration file for errors. The system journal (for example, journalctl -xe), consulted after attempting to restart Filebeat, will point you to the offending line(s).

Testing Kibana

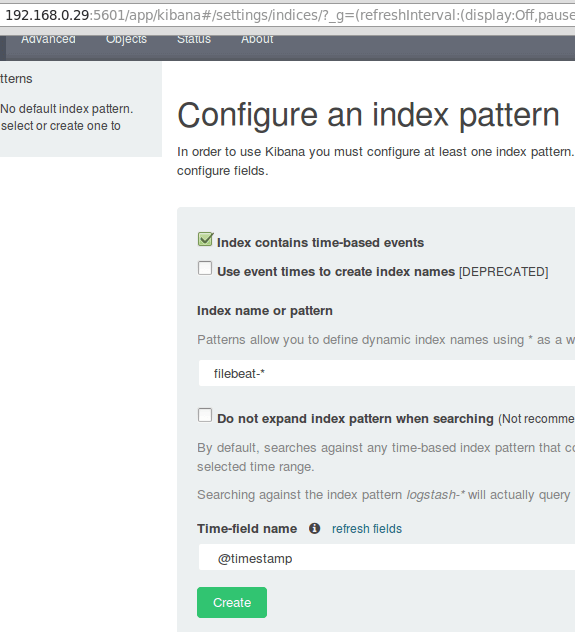

Once we have verified that logs are being shipped by the clients and received successfully on the server, the first thing to do in Kibana is to configure an index pattern and set it as the default.

You can think of an index as the equivalent of a full database in a relational database context. We will go with filebeat-* (or you can use more precise search criteria, as explained in the official documentation).

Enter filebeat-* in the Index name or pattern field and then click Create:

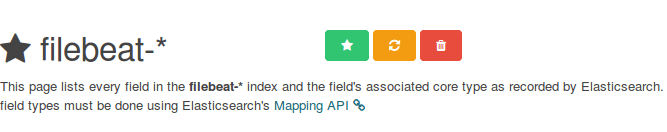

Please note that you will be able to enter more fine-grained search criteria later. Next, click the star inside the green rectangle to configure it as the default index pattern:

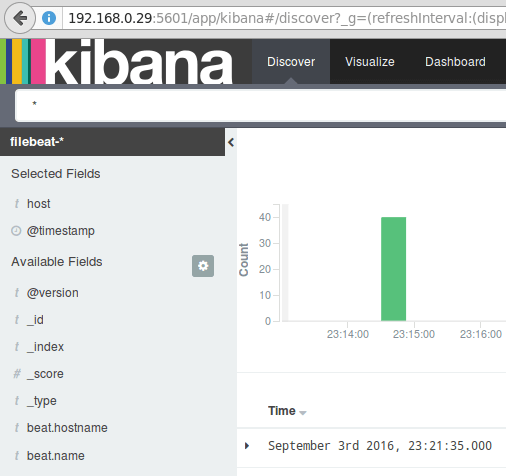

Finally, in the Discover menu you will find several fields to add to the log visualization report. Just hover over them and click Add:

The results will be shown in the central area of the screen as shown above. Feel free to play around (add and remove fields from the log report) to become familiar with Kibana.

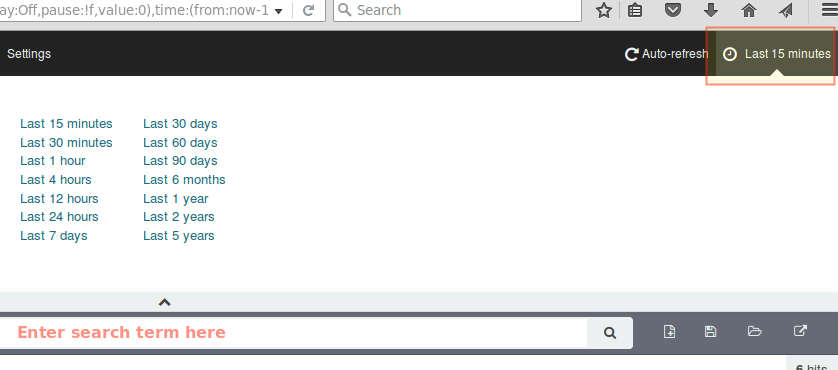

By default, Kibana will display the records that were processed during the last 15 minutes (see upper right corner) but you can change that behavior by selecting another time frame:

Summary

In this article we have explained how to set up an ELK stack to collect the system logs sent by two clients: a CentOS 7 machine and a Debian 8 machine.

Now you can refer to the official Elasticsearch documentation and find more details on how to use this setup to inspect and analyze your logs more efficiently.

If you have any questions, don’t hesitate to ask. We look forward to hearing from you.
